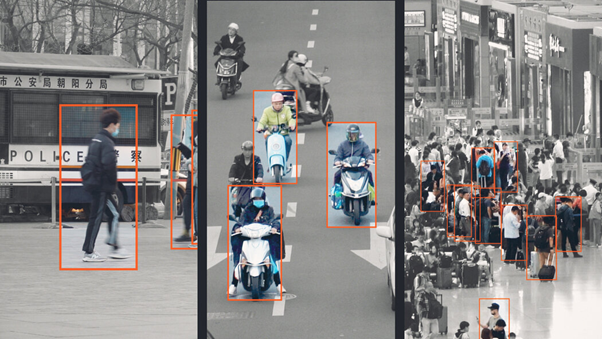

Image illustrating Mass Surveillance in China

AUTHOR: Sara B. Gero

In the age of artificial intelligence, the right to privacy is increasingly under threat. Governments across the world are using artificial intelligence to repress the right to protest, for example by identifying protesters through facial recognition software. The use of AI tools has particularly harmful impacts on marginalised communities. Migrants are often excluded from regulatory protections, including those of the European Union’s AI Act, and are treated as ‘testing grounds’ for controversial new technologies such as biometric identification. States often invoke national security and border control to create regulatory loopholes that lead to human rights abuses. Some forms of AI surveillance, including highly invasive spyware, must be banned altogether.

Surveillance and marginalised groups

Many countries have weaponised AI tools against immigrants, LGBTQ+ activists, women, and human rights defenders. One of the most widely used spyware tools is the Israeli software Pegasus. It has been used in El Salvador, Morocco, and Serbia, for example, to target journalists critical of their respective governments. It is used so widely because it is among the most comprehensive spyware tools available: it can access everything the target does on their phone. In cases such as these, oversight alone might not be enough to solve the issue, and the only way forward might be to ban such tools altogether, as they infringe on human rights even in their ordinary operation.

Furthermore, AI tools also affect the right to assembly and the right to equal treatment under the law. In the Philippines, the government has weaponised digital tools, misinformation and a flawed anti-terrorism law to create fear and intimidate human rights defenders. In Thailand, women and LGBTQ+ activists have been targeted with digital surveillance, including the Pegasus spyware, by both state and non-state actors in an effort to silence them. In the United States, AI facial recognition cameras have been known to flag individuals as suspicious because they spoke a different language or wore culturally distinctive clothing. The racial bias that is currently intrinsic to all facial recognition AI models is widely known, but governments and police forces have wilfully ignored it. For example, the New York Police Department stopped tracking facial recognition accuracy in 2015 because the error rate was too high. The US government is also known to use AI tools that enable constant mass monitoring and surveillance, often for the purpose of targeting non-citizens. That the problem spans continents, social groups, and situations shows that it is systemic, and thus legislative solutions are a must.

The way forward

It is clear that AI poses a threat to human rights, including the right to assembly and the right to privacy, but few governments are willing to sacrifice profit to protect these rights. The European Union has adopted the AI Act, which seeks to limit these abuses to a certain extent, but its interest in protecting human rights does not seem to extend beyond the borders of the Union, as it has made no attempt to limit the export of harmful AI technologies from the EU. This is not due to ignorance on the part of legislators, as it is widely known that companies based in the EU are providing rights-violating technologies to governments that use them to oppress marginalised communities. A clear example is a digital surveillance system produced by Dutch, French and Swedish companies that has been used in China’s mass surveillance programs targeting Uyghurs, thus aiding the Chinese government’s ethnic cleansing.

Overall, legislation on AI surveillance must be comprehensive and clear, and it must leave no room for facilitating human rights abusers. Moreover, there should be no exemptions from AI regulation under the guise of migration control, as these can quickly lead to racial profiling and discriminatory practices.

Sources:

Amnesty International. (2022, August 8). EU companies selling surveillance tools to China’s human rights abusers. https://www.amnesty.org/en/latest/press-release/2020/09/eu-surveillance-sales-china-human-rights-abusers/

Amnesty International. (2023, August 10). The Pegasus Project: One year on, spyware crisis continues after failure to clamp down on surveillance industry. https://www.amnesty.org/en/latest/news/2022/07/the-pegasus-project-one-year-on-spyware-crisis-continues-after-failure-to-clamp-down-on-surveillance-industry/

Amnesty International. (2023, December 6). EU: Lawmakers reluctant to stop EU companies profiting from surveillance and abuse through the AI Act. https://www.amnesty.org/en/latest/news/2023/12/eu-lawmakers-reluctant-to-stop-eu-companies-profiting-from-surveillance-and-abuse-through-the-ai-act/

Amnesty International. (2024, January 5). India: Damning new forensic investigation reveals repeated use of Pegasus spyware to target high-profile journalists. https://www.amnesty.org/en/latest/news/2023/12/india-damning-new-forensic-investigation-reveals-repeated-use-of-pegasus-spyware-to-target-high-profile-journalists/

Amnesty International. (2024, January 29). The urgent but difficult task of regulating artificial intelligence. https://www.amnesty.org/en/latest/campaigns/2024/01/the-urgent-but-difficult-task-of-regulating-artificial-intelligence/

Amnesty International. (2025, March 27). Serbia: Technical Briefing: Journalists targeted with Pegasus spyware. https://www.amnesty.org/en/documents/eur70/9186/2025/en/

Amnesty International. (2025, November 24). USA: Amnesty International, S.T.O.P. lawsuit reveals NYPD surveillance abuses. https://www.amnesty.org/en/latest/news/2025/11/amnesty-and-s-t-o-p-reveal-nypd-surveillance-abuses/

Amnesty International. (2026, January 29). Impact of digital and AI-Assisted surveillance on assembly and association rights, including chilling effects: Submission to the Special Rapporteur on Freedom of Peaceful Assembly and of Association. https://www.amnesty.org/en/documents/ior40/0484/2025/en/

Source(s) image(s):

Mozur, P., Xiao, M., & Liu, J. (2022, July 4). ‘Una jaula invisible’: así es como China vigila el futuro. The New York Times. https://www.nytimes.com/es/2022/07/04/espanol/china-vigilancia.html